A new academic study has uncovered fundamental weaknesses in Microsoft’s PhotoDNA system, showing it can be bypassed, manipulated to produce false matches, and even partially reversed to reveal image content.

The findings raise serious concerns about the reliability of a technology widely used to justify large-scale scanning of user content.

The paper, by researchers at Ghent University and KU Leuven, presents the first “white-box” analysis of PhotoDNA, effectively reverse-engineering its internal workings. The team developed what they call “Alleged PhotoDNA,” a function that produces identical hashes to the original system across large datasets, allowing them to systematically analyze its behavior and weaknesses.

Their work builds on years of limited black-box testing and was coordinated with Microsoft through a responsible disclosure process. Microsoft confirmed that at least some of the demonstrated attacks affect the currently deployed version of PhotoDNA, though specific details were withheld for safety reasons.

PhotoDNA, introduced in 2009, is the industry-standard perceptual hashing system used to detect known child sexual abuse material (CSAM). It is deployed by major platforms and forms the backbone of databases maintained by organizations like the U.S. National Center for Missing & Exploited Children (NCMEC), which are widely shared across the tech industry. The system works by converting images into hash values that can be compared against known illegal content, even after minor edits.
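The general idea of matching by perceptual hash can be illustrated with a deliberately simplified sketch. This is not PhotoDNA: the `toy_hash` function (cell-by-cell average brightness), the `distance` metric, and the `threshold` value are all assumptions chosen for illustration. The sketch only shows why a lightly edited copy can still land close to a stored hash while an unrelated image does not.

```python
from typing import List

Image = List[List[int]]  # grayscale pixels in the range 0..255


def toy_hash(img: Image, grid: int = 2) -> List[int]:
    """Toy perceptual hash (NOT PhotoDNA): mean brightness per grid cell."""
    ch, cw = len(img) // grid, len(img[0]) // grid
    out = []
    for gy in range(grid):
        for gx in range(grid):
            cell = [img[y][x]
                    for y in range(gy * ch, (gy + 1) * ch)
                    for x in range(gx * cw, (gx + 1) * cw)]
            out.append(sum(cell) // len(cell))
    return out


def distance(a: List[int], b: List[int]) -> int:
    """Sum of absolute differences between two hashes."""
    return sum(abs(x - y) for x, y in zip(a, b))


def matches(candidate: Image, known: List[List[int]], threshold: int = 10) -> bool:
    """True if the candidate's hash is within `threshold` of any known hash."""
    h = toy_hash(candidate)
    return any(distance(h, k) <= threshold for k in known)


# A synthetic 8x8 gradient stands in for a known image.
original = [[x * 16 + y * 8 for x in range(8)] for y in range(8)]
edited = [[min(255, p + 2) for p in row] for row in original]  # slight brightening
unrelated = [[255 - p for p in row] for row in original]       # inverted image

database = [toy_hash(original)]
print(matches(edited, database))     # True
print(matches(unrelated, database))  # False
```

The threshold is what gives such a scheme its tolerance to small edits, and it is also what leaves room for crafted near-matches.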

However, the new research challenges core assumptions about the system’s reliability.

The researchers found that PhotoDNA’s internal structure is mathematically predictable, relying on piecewise-linear and differentiable operations. Critically, the hash depends largely on simple pixel value sums rather than complex visual features, making it easier to manipulate than previously believed.
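Why a reliance on pixel sums makes a hash predictable can be seen in a toy model. The `block_sums` function below is a hypothetical stand-in, not the researchers' reconstruction and not PhotoDNA itself. Because it is purely additive, the effect of any pixel change on the hash can be computed in advance without re-hashing the image.

```python
from typing import List

Image = List[List[int]]


def block_sums(img: Image, grid: int = 2) -> List[int]:
    """Toy hash (NOT PhotoDNA): sum of pixel values in each grid cell."""
    ch, cw = len(img) // grid, len(img[0]) // grid
    return [
        sum(img[y][x]
            for y in range(gy * ch, (gy + 1) * ch)
            for x in range(gx * cw, (gx + 1) * cw))
        for gy in range(grid)
        for gx in range(grid)
    ]


def add_images(a: Image, b: Image) -> Image:
    """Pixel-wise sum of two images of equal size."""
    return [[pa + pb for pa, pb in zip(ra, rb)] for ra, rb in zip(a, b)]


image = [[x * 16 + y * 8 for x in range(8)] for y in range(8)]

# A perturbation that touches a single pixel in the top-left cell.
delta = [[0] * 8 for _ in range(8)]
delta[1][2] = 5

base = block_sums(image)
shift = block_sums(delta)
predicted = [b + s for b, s in zip(base, shift)]
actual = block_sums(add_images(image, delta))

print(shift)                # [5, 0, 0, 0]
print(predicted == actual)  # True
```

An attacker who knows the function has this property can work backwards from a desired hash to the pixel changes that produce it.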

Using this knowledge, the team demonstrated several practical attacks:

- Exact collisions: Two completely different images can be modified to produce identical hashes.

- False positives: Attackers can craft benign images that match hashes of illegal content, potentially incriminating innocent users.

- Detection evasion: Illicit images can be subtly altered to avoid detection while remaining visually unchanged.

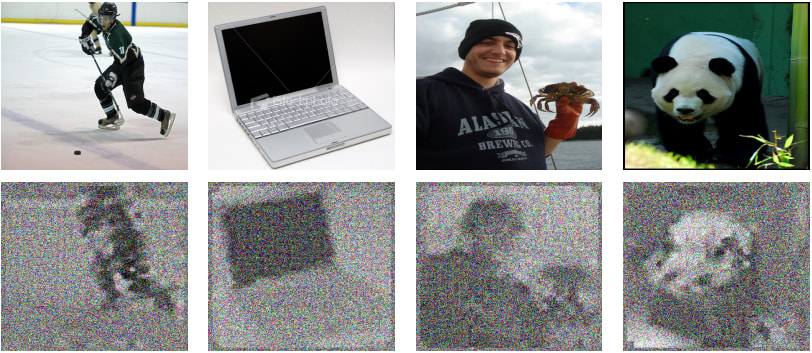

- Preimage reconstruction: It is possible to recover recognizable shapes and composition from a hash value, contradicting claims that PhotoDNA is irreversible.

These attacks are highly efficient. In many cases, they succeed in seconds or minutes on a standard laptop, with success rates close to 100%.
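To make the false-positive idea concrete, here is a minimal sketch against a toy sum-based hash. It does not reproduce the paper's attacks, and `block_sums` and `steer_to_target` are hypothetical names introduced only for this example. It nudges the pixels of a harmless image until its toy hash equals that of a different image.

```python
from typing import List

Image = List[List[int]]


def block_sums(img: Image, grid: int = 2) -> List[int]:
    """Toy hash (NOT PhotoDNA): sum of pixel values in each grid cell."""
    ch, cw = len(img) // grid, len(img[0]) // grid
    return [
        sum(img[y][x]
            for y in range(gy * ch, (gy + 1) * ch)
            for x in range(gx * cw, (gx + 1) * cw))
        for gy in range(grid)
        for gx in range(grid)
    ]


def steer_to_target(img: Image, target: List[int], grid: int = 2) -> Image:
    """Return a copy of img whose toy hash equals `target`."""
    out = [row[:] for row in img]
    ch, cw = len(img) // grid, len(img[0]) // grid
    for idx, (cur, want) in enumerate(zip(block_sums(img, grid), target)):
        gy, gx = divmod(idx, grid)
        diff = want - cur
        step = 1 if diff > 0 else -1
        coords = [(y, x)
                  for y in range(gy * ch, (gy + 1) * ch)
                  for x in range(gx * cw, (gx + 1) * cw)]
        # Spread the required change over the cell, one unit per pixel per pass,
        # keeping every pixel inside the valid 0..255 range.
        while diff != 0:
            progressed = False
            for y, x in coords:
                if diff == 0:
                    break
                if 0 <= out[y][x] + step <= 255:
                    out[y][x] += step
                    diff -= step
                    progressed = True
            if not progressed:
                raise ValueError("target sum unreachable for this cell")
    return out


# A gradient stands in for a flagged image; a checkerboard is the benign one.
target_img = [[x * 16 + y * 8 for x in range(8)] for y in range(8)]
benign = [[64 if (x + y) % 2 == 0 else 192 for x in range(8)] for y in range(8)]

target = block_sums(target_img)
forged = steer_to_target(benign, target)

print(block_sums(forged) == target)  # True: the toy hashes collide
print(forged == target_img)          # False: the images are different
```

In this toy the forged image is visibly altered. The article's point is that against the real system the researchers report such manipulations succeeding quickly and reliably.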

Privacy implications

PhotoDNA has long been promoted as a privacy-preserving tool because it compares hashes rather than analyzing images directly, but the study suggests that this assurance may not hold.

The ability to reconstruct visual information from hashes undermines claims that the system does not leak sensitive data. At the same time, the existence of reliable false positives and evasion techniques calls into question its effectiveness as a detection tool.

This becomes especially significant in the context of client-side scanning (CSS), where PhotoDNA-like systems are proposed to run directly on user devices. Such approaches would require storing large databases of hashes locally and scanning billions of private images.

According to the researchers, this creates a worst-case scenario in which users’ private content is systematically analyzed, yet the detection mechanism itself can be bypassed or abused. The paper explicitly warns that false positives and information leakage are “particularly problematic” in such deployments.

The findings arrive amid ongoing legislative debates in the EU, U.S., and elsewhere over mandatory CSAM detection measures, including proposals that could weaken or bypass end-to-end encryption.

Many of these proposals implicitly rely on PhotoDNA or similar perceptual hashing technologies as a technical foundation. This study suggests that foundation may be far less robust than assumed.
