Google Threat Intelligence Group (GTIG) says it has identified what it believes is the first known case of cybercriminals using artificial intelligence to help develop a zero-day exploit intended for mass exploitation.

According to Google, the exploit targeted a popular open-source web-based system administration platform and allowed attackers to bypass two-factor authentication (2FA) under certain conditions. The company says it worked with the affected vendor to responsibly disclose the flaw before it could be widely abused.

GTIG did not name the platform or the cybercriminals involved, but said the exploit was implemented as a Python script designed to bypass 2FA after valid credentials had already been obtained.

Researchers say they have “high confidence” that an AI model assisted in both discovering and weaponizing the vulnerability, although they do not believe Gemini itself was used.

Google based that assessment on multiple indicators within the exploit code, including extensive educational-style docstrings, unusually structured “textbook” Python formatting, detailed help menus, and even a hallucinated CVSS severity score embedded in the script.

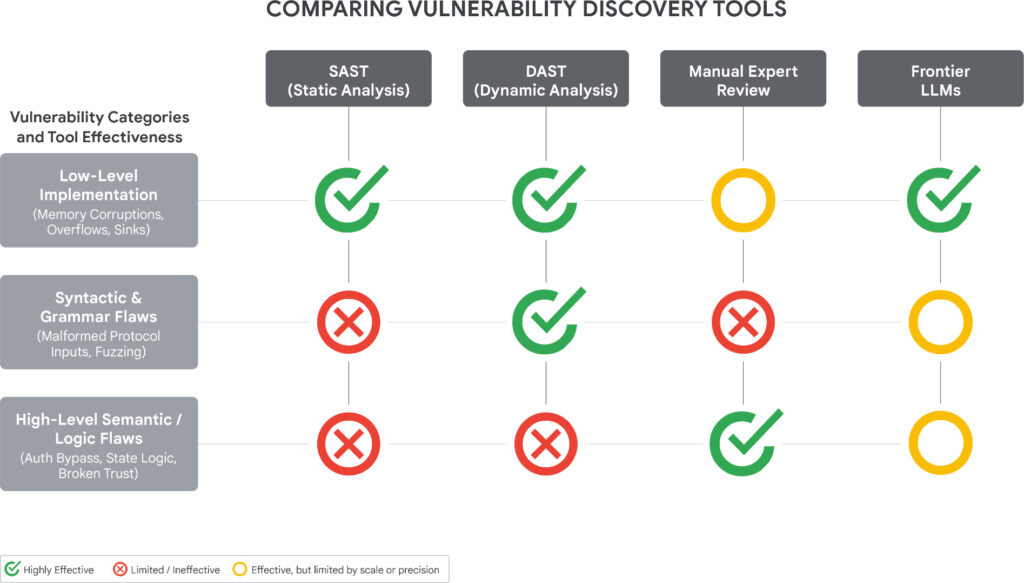

Unlike traditional bugs discovered through fuzzing or static analysis, this issue stemmed from a semantic flaw in the application's authentication flow, caused by a hardcoded trust assumption. Google says large language models are increasingly capable of reasoning about developer intent and spotting contradictions in security logic that automated scanners often miss.
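To make the idea of a "hardcoded trust assumption" concrete, here is a minimal, purely hypothetical Python sketch. It is not the disclosed flaw, which Google has not detailed; the header name `X-Internal-Client`, the `LoginRequest` type, and both functions are invented for illustration. It only shows the general class of bug: code that runs without crashing, yet contradicts the developer's intent that every login must pass a second factor.

```python
from dataclasses import dataclass, field

# Hypothetical header name, invented for this illustration.
TRUSTED_CLIENT_HEADER = "X-Internal-Client"


@dataclass
class LoginRequest:
    username: str
    password_ok: bool  # outcome of the password check
    otp_ok: bool       # outcome of the one-time-code (2FA) check
    headers: dict = field(default_factory=dict)


def login_flawed(req: LoginRequest) -> bool:
    """Illustrative flawed flow: a caller-controlled value is trusted."""
    if not req.password_ok:
        return False
    # Hardcoded trust assumption: "internal" clients are assumed to be
    # pre-verified, but the header is supplied by the caller.
    if req.headers.get(TRUSTED_CLIENT_HEADER) == "1":
        return True
    return req.otp_ok


def login_fixed(req: LoginRequest) -> bool:
    """Both factors are always required; no client-supplied shortcut."""
    return req.password_ok and req.otp_ok


# A request with valid credentials, no valid one-time code, and the header set.
request = LoginRequest(
    username="admin",
    password_ok=True,
    otp_ok=False,
    headers={TRUSTED_CLIENT_HEADER: "1"},
)
print(login_flawed(request), login_fixed(request))
```

In this sketch nothing malfunctions at the memory or parsing level, so a fuzzer has no crash to find; the problem is visible only by comparing what the code does with what the authentication flow is supposed to guarantee.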

The report also highlights growing interest from state-backed hackers in AI-assisted vulnerability research. Google observed China- and North Korea-linked threat actors using AI models for reverse engineering, exploit validation, and vulnerability analysis, including large-scale automated CVE research and testing inside controlled lab environments.

Beyond exploit development, GTIG says threat actors are increasingly using AI to automate malware development, reconnaissance, and attack operations. The report also documents attempts to industrialize the abuse of commercial AI platforms through automated account-creation systems, proxy relays, and account-pooling services designed to evade provider restrictions.

Google says it continues to use AI internally for defensive purposes, including vulnerability discovery and automated remediation efforts to identify and fix software flaws before attackers can exploit them.
