ExpressVPN has announced the rollout of ExpressAI, a browser-based artificial intelligence platform designed to process user interactions in cryptographically isolated environments, aiming to eliminate provider access to prompts, files, and conversation history.

The VPN company positions ExpressAI as a response to growing concerns around how mainstream AI services log, store, and reuse user-submitted data. The platform has been in development with a focus on ensuring that neither ExpressVPN nor third-party model providers can access user inputs.

At the core of ExpressAI is a confidential computing architecture, where each interaction is processed inside a secure enclave. In this setup, encryption keys are generated and maintained within hardware, preventing external systems, including cloud infrastructure operators, from accessing decrypted data. This approach aims to create what the company describes as “cryptographic isolation,” effectively sealing conversations from external visibility.

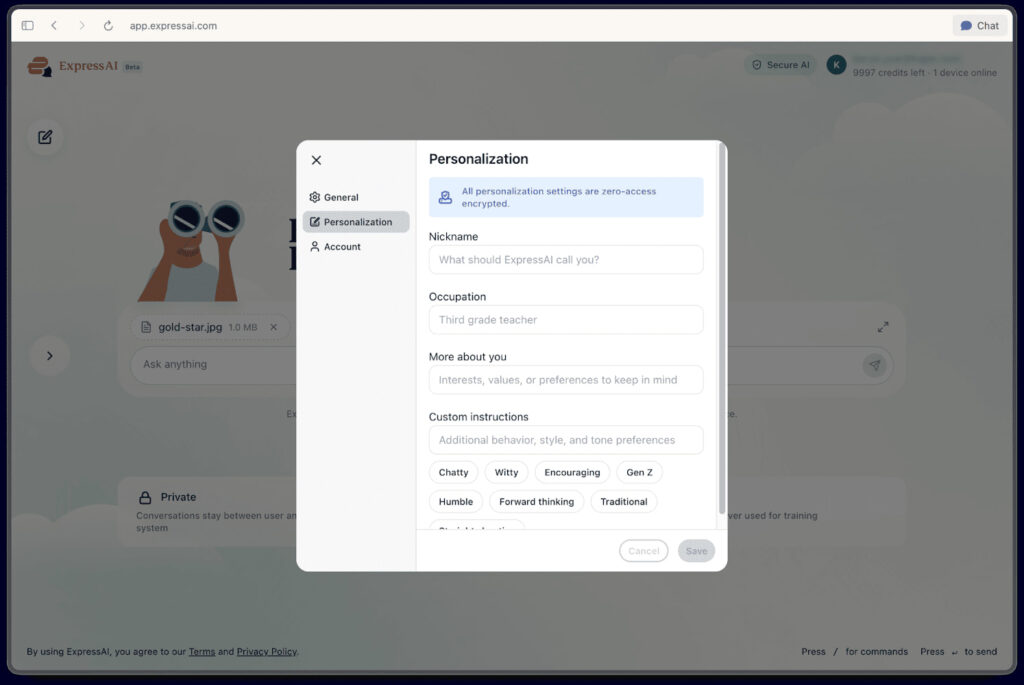

ExpressAI also incorporates zero-access encryption for stored data, including conversation histories and uploaded files. Users can optionally enable a “Ghost Mode,” which automatically deletes conversations after use. ExpressVPN states that no prompts or files are used for AI model training, and no human review of user data takes place.


The product underwent an independent security audit by Cure53 between February and March 2026. The assessment included penetration testing and a review of frontend and backend components, cryptographic implementations, and key management systems. Cure53 reported that the platform’s architecture aligns with its stated privacy goals, noting that user interactions are handled within isolated computing environments. The firm added that the vulnerabilities it identified were addressed prior to launch.

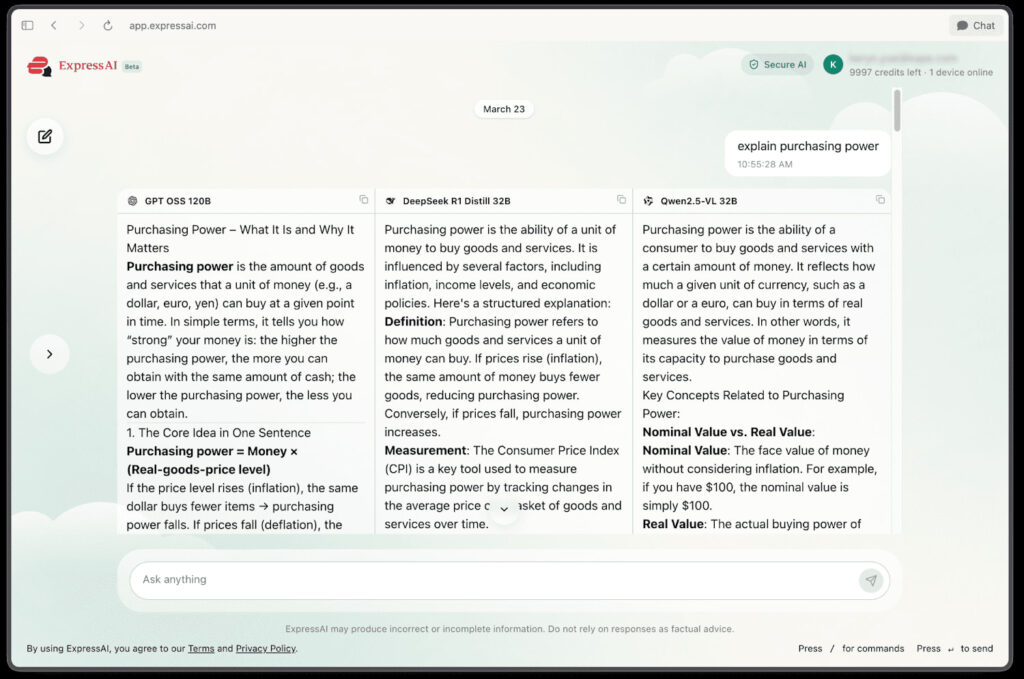

From a functionality standpoint, ExpressAI aggregates multiple open-weight AI models into a single interface. These include GPT OSS 120B for general-purpose tasks, DeepSeek R1 Distill 32B for reasoning-heavy queries, Qwen2.5-VL for image and document analysis, Qwen3.5 for coding and multilingual tasks, and NVIDIA’s Nemotron 12B for technical workloads. A built-in web search feature allows models to retrieve up-to-date information during queries.

The platform operates on a credit-based usage system, offering 500 daily credits, with each model query consuming one credit. Users are also provided with 2GB of encrypted storage and a 50MB per-file upload limit.
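
As a rough illustration of what those limits mean in practice, here is a minimal Python sketch of the quota arithmetic. The numbers are taken from the announcement; the `QuotaTracker` class and its method names are hypothetical and not part of any ExpressAI API, and counting 1 GB as 1024^3 bytes and 1 MB as 1024^2 bytes is an assumption, since the announcement does not say how the units are measured.

```python
from dataclasses import dataclass

# Limits quoted in the announcement (binary units are an assumption).
DAILY_CREDITS = 500
CREDITS_PER_QUERY = 1
STORAGE_LIMIT_BYTES = 2 * 1024**3   # 2 GB of encrypted storage
MAX_FILE_BYTES = 50 * 1024**2       # 50 MB per uploaded file


@dataclass
class QuotaTracker:
    """Hypothetical client-side tracker for one user's daily quota."""
    credits_left: int = DAILY_CREDITS
    storage_used: int = 0

    def can_query(self) -> bool:
        # A query is allowed while at least one credit remains.
        return self.credits_left >= CREDITS_PER_QUERY

    def record_query(self) -> None:
        if not self.can_query():
            raise RuntimeError("daily credits exhausted")
        self.credits_left -= CREDITS_PER_QUERY

    def can_upload(self, size_bytes: int) -> bool:
        # The file must fit the per-file cap and the remaining storage.
        fits_file_cap = 0 < size_bytes <= MAX_FILE_BYTES
        fits_storage = self.storage_used + size_bytes <= STORAGE_LIMIT_BYTES
        return fits_file_cap and fits_storage

    def record_upload(self, size_bytes: int) -> None:
        if not self.can_upload(size_bytes):
            raise ValueError("upload exceeds file or storage limit")
        self.storage_used += size_bytes


# How many maximum-size files fit in the storage allowance.
MAX_FULL_SIZE_FILES = STORAGE_LIMIT_BYTES // MAX_FILE_BYTES
```

Under these assumptions, 500 credits means 500 model queries per day, and the 2GB allowance holds 40 files at the full 50MB size.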

Other than this and Duck.ai, what else do we have? Are there any other private AI chatbots?