A financially motivated, Russian-speaking threat actor used commercial generative AI services to compromise more than 600 FortiGate devices across 55 countries.

Rather than exploiting zero-day flaws, the campaign relied on exposed management interfaces and weak credentials, with AI acting as a force multiplier that enabled large-scale, parallel intrusions.

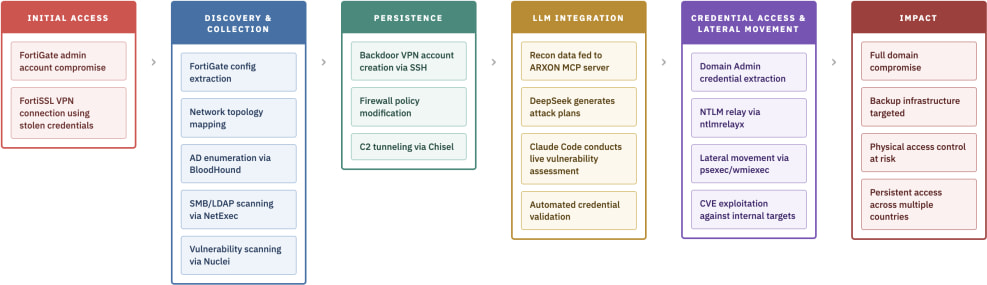

The activity was observed between January 11 and February 18, 2026, by AWS researchers, who reported that the attackers leveraged multiple commercial large language model (LLM) providers throughout the intrusion lifecycle. In a parallel investigation published by an independent Japanese researcher, an exposed server containing over 1,400 operational files revealed the inner workings of what appears to be the same AI-augmented campaign. The infrastructure exposed stolen FortiGate configurations, Active Directory data, credential dumps, exploit code, and AI-generated attack plans.

FortiGate appliances, developed by cybersecurity vendor Fortinet, are widely used enterprise firewalls that often serve as VPN gateways and perimeter security devices for businesses, telecom providers, industrial firms, and managed service providers worldwide. Because they store VPN credentials, administrative passwords, and network topology data, they represent high-value targets when misconfigured or exposed to the internet.

According to Amazon, the attacker did not exploit new FortiGate vulnerabilities. Instead, they systematically scanned for internet-exposed management ports (443, 8443, 10443, 4443) and performed credential-based logins using commonly reused passwords. Once authenticated, they extracted full configuration backups containing SSL-VPN user credentials, LDAP bind accounts, IPsec VPN settings, and detailed routing information.
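For defenders, the exposure described above can be checked offline against a device's own configuration backup. The sketch below is a simplified illustration, not a Fortinet tool: it assumes the backup uses the usual `config system interface` / `edit` / `set allowaccess` layout. The function name `find_exposed_management`, the `RISKY_PROTOCOLS` set, and the sample configuration are hypothetical. It only reports which named external interfaces permit administrative protocols. It does not test reachability or check listening ports.

```python
import re

# Administrative protocols that normally should not be enabled on an
# internet-facing interface (an assumption made for this sketch).
RISKY_PROTOCOLS = {"https", "http", "ssh", "telnet"}


def find_exposed_management(config_text, external_interfaces):
    """Return {interface: sorted risky protocols} for the given external interfaces."""
    findings = {}
    in_section = False  # inside "config system interface"
    depth = 0           # nesting level of sub-blocks inside an interface entry
    current = None      # interface currently being read
    for raw in config_text.splitlines():
        line = raw.strip()
        if not in_section:
            if line == "config system interface":
                in_section = True
            continue
        if line.startswith("config "):
            depth += 1                      # nested block such as "config ipv6"
        elif line == "end":
            if depth:
                depth -= 1                  # closes a nested block
            else:
                in_section, current = False, None
        elif depth == 0:
            edit = re.match(r'edit "?([^"]+)"?$', line)
            if edit:
                current = edit.group(1)
            elif line == "next":
                current = None
            elif line.split()[:2] == ["set", "allowaccess"]:
                risky = sorted(set(line.split()[2:]) & RISKY_PROTOCOLS)
                if risky and current in external_interfaces:
                    findings[current] = risky
    return findings


# Hypothetical sample backup fragment, for illustration only.
SAMPLE = '''
config system interface
    edit "wan1"
        set allowaccess ping https ssh
        config ipv6
            set ip6-allowaccess ping
        end
    next
    edit "internal"
        set allowaccess ping https
    next
end
'''

print(find_exposed_management(SAMPLE, {"wan1"}))
```

Which interfaces count as external is left to the caller, since interface names vary between deployments.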

The Hunt.io investigation provides granular insight into post-access activity. In one documented intrusion targeting an industrial gas company in the Asia-Pacific region, the attacker used a read-only “Technical_support” admin account to pull a full FortiGate-40F configuration. Two Python scripts tied to CVE-2019-6693 were likely used to decrypt Fortinet’s ENC-formatted passwords, yielding valid Active Directory credentials.

From there, the actor pivoted internally via VPN, conducting reconnaissance and feeding results into a custom Model Context Protocol (MCP) server named ARXON. This Python-based component interfaced with commercial LLMs, including DeepSeek and Claude, to generate step-by-step attack plans, prioritize targets, and produce vulnerability assessments during live intrusions. A companion Go-based orchestrator dubbed CHECKER2 automated parallel VPN connections and scanning across thousands of stolen configurations.


Operational logs show that one deployment processed 2,516 targets across 106 countries in containerized batches. Each workflow ingested a FortiGate configuration, attempted VPN access, mapped internal networks, and passed the results to ARXON for AI-driven analysis. The exposed server also contained folders labeled “claude” and “claude-0” with task outputs and cached prompts, as well as a .claude settings file that pre-approved autonomous execution of Impacket tools (secretsdump.py, psexec.py, wmiexec.py), Metasploit modules, and hashcat using hardcoded domain credentials.
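Incident responders who recover a similar agent settings file may want a quick way to list which pre-approved commands involve offensive tooling. The following is a minimal sketch under stated assumptions: the report does not describe the file's internal layout, so the `permissions` / `allow` JSON structure used here is assumed, and the function name `risky_preapprovals`, the keyword list, and the sample entries are hypothetical.

```python
import json

# Tool names drawn from the article; "msfconsole" is included as the usual
# Metasploit command-line entry point. Matching is a plain substring check.
RISKY_TOOLS = ("secretsdump", "psexec", "wmiexec", "msfconsole", "metasploit", "hashcat")


def risky_preapprovals(settings_json):
    """Return pre-approved entries that mention one of the listed tools."""
    settings = json.loads(settings_json)
    # Assumed layout: {"permissions": {"allow": ["<command pattern>", ...]}}
    allowed = settings.get("permissions", {}).get("allow", [])
    return [
        entry
        for entry in allowed
        if isinstance(entry, str)
        and any(tool in entry.lower() for tool in RISKY_TOOLS)
    ]


# Hypothetical settings content, for illustration only.
EXAMPLE_SETTINGS = json.dumps({
    "permissions": {
        "allow": [
            "Bash(ls:*)",
            "Bash(python3 secretsdump.py:*)",
            "Bash(hashcat:*)",
        ]
    }
})

for entry in risky_preapprovals(EXAMPLE_SETTINGS):
    print("pre-approved offensive tool:", entry)
```

A substring match is deliberately crude. It will miss renamed binaries and is meant only as a first triage pass.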

In the post-exploitation phase, the actor deployed open-source tools such as gogo for port scanning, Nuclei for HTTP vulnerability discovery, BloodHound for AD mapping, and Impacket for NTLM relay and DCSync attacks. In confirmed cases, they extracted full NTLM hash databases from domain controllers and attempted lateral movement using pass-the-hash and pass-the-ticket techniques. Backup infrastructure, particularly Veeam Backup & Replication servers, was specifically targeted using credential extraction scripts and known vulnerability checks. This behavior is consistent with pre-ransomware staging.

Both reports highlight the actor’s technical limitations. When encountering patched systems, closed ports, or hardened environments, the attacker frequently failed and moved on. Their tooling showed hallmarks of AI-generated code, including redundant comments, fragile parsing logic, and simplistic architecture. Amazon assesses the operator as having low-to-medium baseline skills, heavily augmented by AI across planning, scripting, and reporting tasks.
