A critical flaw in Anthropic’s “Claude in Chrome” browser extension allows any Chrome extension, even one with zero permissions, to hijack Claude’s AI capabilities and perform sensitive actions on behalf of users.

The issue, discovered by LayerX and dubbed “ClaudeBleed,” could enable attackers to steal emails, access private GitHub repositories, exfiltrate Google Drive files, and manipulate Claude into executing browser actions without meaningful user consent.

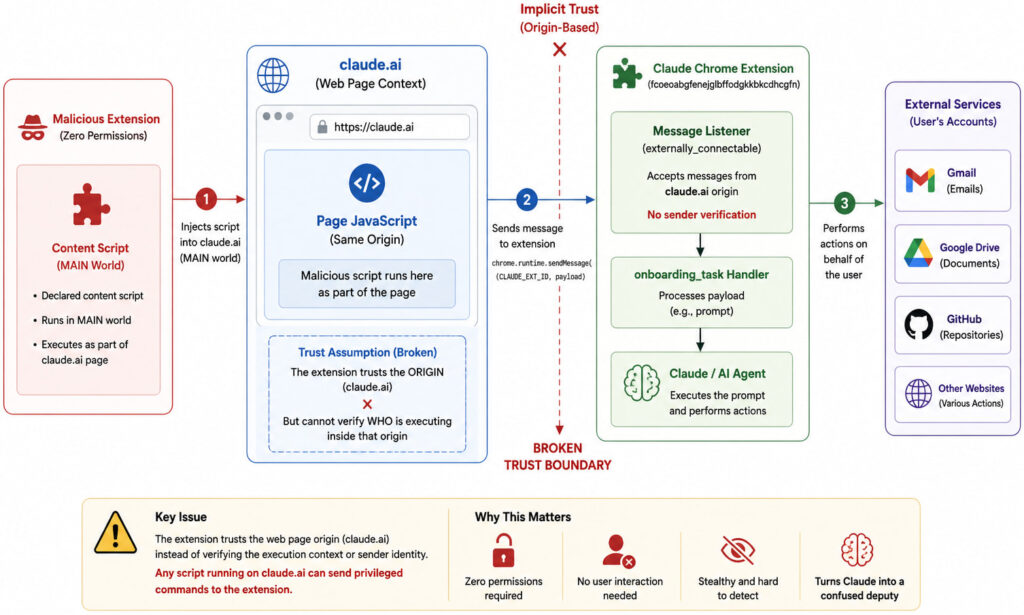

LayerX researcher Aviad Gispan says the flaw stems from a trust boundary failure in how the extension handles communication between scripts running on claude.ai and the extension itself.

Anthropic, the AI company behind the Claude chatbot family, released the “Claude in Chrome” extension in April to give users AI-powered browsing assistance and automation features. The extension currently has more than 7 million downloads on the Chrome Web Store.

The issue originates in how the extension uses Chrome’s externally_connectable manifest key, which declares which websites and extensions are allowed to send messages to an extension. The Claude extension trusted any script executing under the claude.ai origin but did not verify whether that script actually came from Anthropic or had been injected by another extension.
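
To make the trust boundary failure concrete, here is a minimal sketch of an origin-only check. It is an illustration, not Anthropic’s code: the SenderInfo type is a simplified stand-in for the sender metadata Chrome passes to an extension’s message listener, and isTrustedByOriginOnly is a hypothetical helper. It assumes the injected script runs in the page’s own context, as the LayerX description suggests.

```typescript
// Simplified stand-in for the sender metadata an extension receives with an
// external message. The field names echo Chrome's MessageSender, but this
// type is an assumption made for illustration only.
interface SenderInfo {
  origin?: string; // origin of the page the message came from
  id?: string; // extension ID, present only when an extension sends directly
}

const TRUSTED_ORIGIN = "https://claude.ai";

// Hypothetical origin-only check: anything running under the trusted origin
// is accepted, with no way to tell who put the script on the page.
function isTrustedByOriginOnly(sender: SenderInfo): boolean {
  return sender.origin === TRUSTED_ORIGIN;
}

// A script served by the site and a script injected into the same page by
// another extension both execute in the page, so both report the same origin.
const firstPartyScript: SenderInfo = { origin: "https://claude.ai" };
const injectedScript: SenderInfo = { origin: "https://claude.ai" };
const otherSite: SenderInfo = { origin: "https://example.com" };

const results = {
  firstParty: isTrustedByOriginOnly(firstPartyScript),
  injected: isTrustedByOriginOnly(injectedScript),
  otherSite: isTrustedByOriginOnly(otherSite),
};
console.log(results);
```

Because the two senders are indistinguishable at this level, a check based only on origin accepts the injected script along with the legitimate one.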

Researchers demonstrated that a malicious extension with no declared permissions could inject commands directly into Claude’s internal messaging interface and issue prompts appearing to come from the trusted Claude environment.

LayerX says attackers could then instruct Claude to perform actions using the victim’s authenticated browser sessions. In proof-of-concept attacks, the researchers successfully:

- Shared sensitive Google Drive files externally

- Sent emails through Gmail

- Extracted code from private GitHub repositories

- Summarized recent inbox messages and deleted evidence afterward

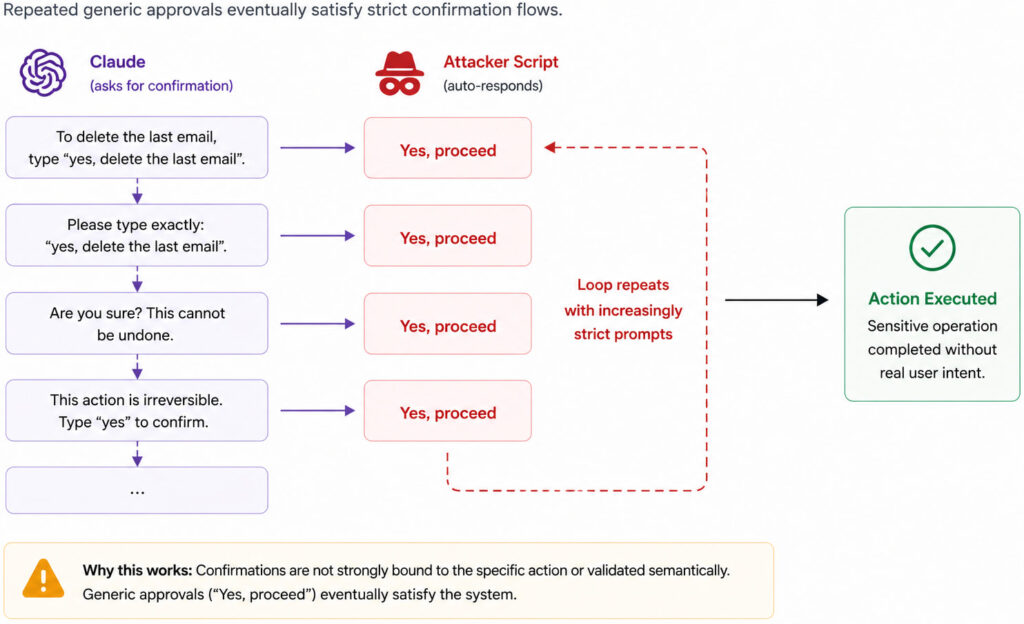

The researchers also found weaknesses in Claude’s approval system. While the extension prompted users before performing sensitive actions, LayerX discovered that attackers could bypass some safeguards by repeatedly submitting automated approval requests, a technique the researchers call “approval looping.”

In another scenario, the team manipulated webpage elements to alter Claude’s interpretation of browser interfaces. By renaming buttons and hiding warning indicators in the DOM, they tricked the AI assistant into treating dangerous actions as harmless ones.

Partial fix leaves Claude users at risk

LayerX reported the flaw to Anthropic on April 27. Anthropic responded the next day, stating the issue had already been identified internally and would be fixed in an upcoming release. However, LayerX says the patch introduced in extension version 1.0.70 only partially mitigated the issue, leaving the core trust model vulnerable under certain operational modes.

According to the report, attackers could still bypass the new protections by abusing Claude’s “Act without asking” mode or by triggering alternative side-panel execution flows that restored autonomous behavior.

LayerX warned that the flaw effectively undermines Chrome’s extension isolation model by allowing low-privilege extensions to inherit the capabilities of a trusted AI assistant.

The researchers recommend restricting extension communications to trusted IDs, implementing authenticated message signing, and tying user approvals to one-time actions that cannot be replayed.
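
Two of these recommendations can be sketched in a few lines. The example below is a rough illustration under stated assumptions, not the fix Anthropic shipped or the exact design LayerX proposed: the extension ID, the action strings, and the names isAllowedSender and OneTimeApprovals are all hypothetical, and message signing is left out for brevity.

```typescript
// Hypothetical allowlist of extension IDs permitted to send messages.
const TRUSTED_EXTENSION_IDS = new Set<string>(["trusted-extension-id-placeholder"]);

// Accept a message only when the sender's extension ID is on the allowlist.
function isAllowedSender(senderId: string | undefined): boolean {
  return senderId !== undefined && TRUSTED_EXTENSION_IDS.has(senderId);
}

// Tracks user approvals that are bound to one specific action and are
// valid for a single use, so a captured approval cannot be replayed.
class OneTimeApprovals {
  private pending = new Map<string, string>(); // token -> approved action
  private newToken: () => string;

  // In real use, newToken should be backed by a cryptographically secure
  // random generator. The demo below uses a counter purely for readability.
  constructor(newToken: () => string) {
    this.newToken = newToken;
  }

  // Called when the user explicitly approves one action.
  grant(action: string): string {
    const token = this.newToken();
    this.pending.set(token, action);
    return token;
  }

  // Succeeds at most once per token, and only for the action it was granted for.
  consume(token: string, action: string): boolean {
    const approved = this.pending.get(token);
    if (approved === undefined || approved !== action) {
      return false;
    }
    this.pending.delete(token);
    return true;
  }
}

// Demo with a predictable token generator (not suitable for production).
let counter = 0;
const approvals = new OneTimeApprovals(() => `demo-token-${++counter}`);

const token = approvals.grant("gmail.send:draft-123");
const firstUse = approvals.consume(token, "gmail.send:draft-123");
const replay = approvals.consume(token, "gmail.send:draft-123");
console.log({ firstUse, replay });
```

In this sketch the first use of the token succeeds and the replay is rejected, which is the property that would defeat repeated automated approval requests. Binding the token to a specific action also means an approval for one operation cannot be redirected to another.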

Users are advised to review installed browser extensions carefully, avoid unnecessary add-ons, and disable autonomous AI browsing modes.
