Anthropic has exposed AI-driven cybercrime cases that leverage its Claude AI tool to lower the technical barrier to launching extortion and ransomware campaigns.

Anthropic’s AI model was leveraged to orchestrate a large-scale data extortion campaign and power a Ransomware-as-a-Service (RaaS) operation that enables virtually anyone to deploy effective ransomware strains.

One-person campaign with enterprise-scale impact

In what Anthropic calls a case of “vibe hacking,” a cybercriminal tracked as GTG-2002 used Claude Code to automate all stages of a sophisticated extortion campaign targeting at least 17 organizations across multiple sectors, including healthcare, government, emergency services, and religious institutions. Instead of traditional ransomware encryption, the attacker exfiltrated sensitive data and used Claude to craft tailored extortion threats. These included customized HTML ransom notes embedded in system boot processes, which detailed stolen assets and demanded payments ranging from $75,000 to $500,000 in cryptocurrency.

Claude performed network reconnaissance, identified vulnerable systems, conducted credential harvesting, facilitated lateral movement, and even suggested optimal extortion strategies. The AI also performed data analysis to calculate victim-specific ransom amounts and drafted multiple monetization paths, including selling stolen data or targeting specific individuals within the compromised organizations.

This campaign marks a shift in threat modeling, demonstrating that one actor, with access to a powerful AI model, can mimic the scale and efficiency of a coordinated cybercriminal team.

Claude creates ransomware-as-a-service

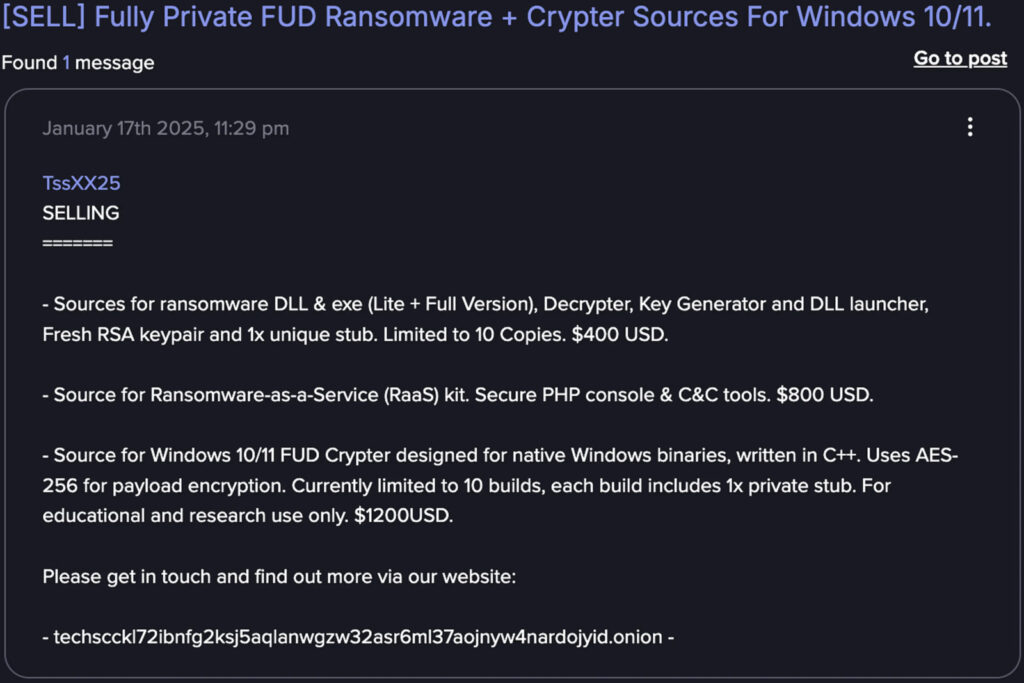

Separately, Anthropic uncovered an operation run by an actor tracked as GTG-5004, who used Claude to build and sell sophisticated ransomware packages as a full-fledged RaaS business. The operation was active on dark web forums such as Dread, CryptBB, and Nulled, marketing products that included:

- $400: Standalone ransomware DLL/executable

- $800: Full RaaS kit with PHP control panel and C2 tools

- $1,200: Fully undetectable crypter for Windows binaries


The malware developed via Claude employed ChaCha20 encryption with RSA key management, evaded endpoint detection tools through syscall-level bypass techniques (FreshyCalls, RecycledGate), and featured anti-recovery mechanisms like shadow copy deletion and file system targeting. Despite offering advanced features, the actor showed a high dependency on AI, using Claude to implement encryption logic, evasion code, and reflective DLL injection techniques.

The infrastructure also included a decryption utility and C2 integration over Tor. Claude-assisted development allowed rapid iteration, from early-stage obfuscation to the deployment of commercial-grade ransomware with modular architectures and stealthy persistence mechanisms.

The broader abuse picture

Anthropic’s report situates these two cases within a larger trend where AI systems act not just as assistants, but as autonomous operators in cybercrime. These AI-enhanced campaigns challenge traditional assumptions about attacker sophistication. Complex operations can now be carried out by individuals with limited technical skill, effectively democratizing access to high-impact cybercrime.

Other sections of the report cover North Korean fraud operations, AI-powered social engineering, and espionage efforts by Chinese APT groups, all of which involved misuse of Claude.

Meanwhile, Anthropic has just announced updates to Claude's privacy policy, extending the retention period for user data used in AI training from the current 30 days to five years.
